Good day!

Localization is the process by which a robot works out where it is within a given area.

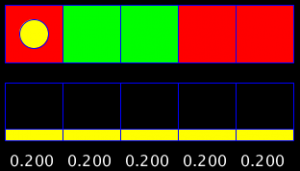

The Processing sketch below illustrates a localization method for a one-dimensional grid. Before seeing the sketch in action, you need to know a few things about it. The sketch tries to determine, probabilistically, where the robot is in the world. The world is a one-dimensional cyclic row of cells (moving off one end brings you back in at the other), and each cell is coloured either red or green. The robot can “see” the colour of the cell it is standing on using its on-board camera, and it can move to the left and to the right. After moving and sensing a few times, the robot should have a probability-based idea of where it is in the world.

Right, that’s it! The usage instructions come first, with the sketch below them. Try it out.

Instructions for use:

The world begins with the robot positioned in the first cell and all the cells coloured red. The robot starts in a state of maximum confusion (it’s totally lost).

The user can change the colour of the cells by clicking on them. Left click for red and right click for green.

There are then four buttons in the sketch. Two of them issue commands to move the robot (the yellow circle) either one cell to the left or one cell to the right. The other two buttons simulate the feedback from the colour sensor on the robot. One of the two simulates the robot sensing red and the other simulates the robot sensing green.

This, of course, allows you to simulate receiving false information from the robot’s sensors (the robot can be on a green cell when the user clicks “Sense Red”).

All of these commands affect the probability of the robot being in each cell of the world. These probabilities are the numbers shown beneath the coloured cells. The higher the probability of a cell, the stronger the belief that the robot is currently in that cell.

Right at the bottom of the sketch there are numbered buttons that allow you to change the number of cells in the world. Each time the number of cells changes, the world resets (the robot is lost again).

So, after moving and sensing a few times, the robot has a fairly good idea of where it is in the world!

It achieves this by implementing a surprisingly simple algorithm. We will look at this algorithm in more detail below.

What does the robot need in order to be able to do this? For starters, it needs a map of the world or area that it is in. It would not be able to localize without this on-board map.

It also, of course, needs to be able to move to the left and to the right, and to sense the colour of the cell it is standing on.

Furthermore, it needs to store the probability of where it is on its map. Each cell in the world is allocated a value that represents the probability that the robot is occupying that cell. Because the robot starts out not knowing where it is, every cell’s initial probability should be the same. Think about it this way: the robot has no idea where it is, so there is an equal chance that it could be on any cell in the world.

These probabilities should be normalized (added together, they should sum to 1), which makes them easier to work with and easier to visualise.

Below is an example of what this would look like in a 5 cell world:

And here in a 7 cell world:
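As a minimal Python sketch of this starting state (the function name `uniform_belief` is made up for illustration and is not taken from the Processing sketch), every cell simply gets one divided by the number of cells:

```python
def uniform_belief(n_cells):
    """Return a belief in which every cell is equally likely."""
    return [1.0 / n_cells] * n_cells


# Five cells: each cell holds 1/5 of the total probability.
print(uniform_belief(5))  # [0.2, 0.2, 0.2, 0.2, 0.2]

# Seven cells: each cell holds 1/7, shown here rounded to 3 decimals.
print([round(p, 3) for p in uniform_belief(7)])
# [0.143, 0.143, 0.143, 0.143, 0.143, 0.143, 0.143]
```

Either way, the values add up to 1, which is the normalization described above.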

Now what happens to these probabilities when a colour is sensed?

The cells with colours that match the colour being sensed have their probability values multiplied by 0.6.

The cells with colours that do not match the colour being sensed have their probability values multiplied by 0.2.

This results in an increased probability of being on the cells whose colours match what was sensed in relation to cells with colours that do not match what was sensed. This makes sense! If you sense red, there is a higher probability that you are on a red cell than on a green cell!
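The sensing step can be sketched in a few lines of Python. This is an illustration only: the world layout and the names `sense`, `P_MATCH` and `P_MISMATCH` are assumptions, and the renormalization at the end is assumed from the earlier remark that the probabilities should sum to 1.

```python
P_MATCH = 0.6     # multiplier for cells whose colour matches the reading
P_MISMATCH = 0.2  # multiplier for cells whose colour does not match


def sense(belief, world, reading):
    """Weight each cell by how well it agrees with the reading, then normalize."""
    weighted = [
        p * (P_MATCH if colour == reading else P_MISMATCH)
        for p, colour in zip(belief, world)
    ]
    total = sum(weighted)
    # Assumed step: rescale so the probabilities sum to 1 again.
    return [w / total for w in weighted]


# Hypothetical 5-cell world, starting from maximum confusion.
world = ["green", "red", "red", "green", "green"]
belief = [0.2] * 5

posterior = sense(belief, world, "red")
print([round(p, 3) for p in posterior])
# [0.111, 0.333, 0.333, 0.111, 0.111]
```

After sensing red, each red cell is three times as likely as each green cell, which is exactly the 0.6 to 0.2 ratio.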

Why not just maximise the probabilities for cells matching the colour sensed and minimise the values for cells with non-matching colours though?

This is because we are working with (or planning to work with) real-life hardware. Unfortunately, in the real world, things don’t always go to plan (shocker, I know). Especially when working with electronic hardware, things can and do go wrong. The colour sensor may malfunction, for example, reading red instead of green or vice versa. If the multiplication values are too extreme, a single bad reading could completely throw your robot off, and it could end up with irreversibly incorrect probabilities. In the most extreme case, multiplying by 0, a cell that has been ruled out can never recover, because 0 multiplied by anything is still 0. Your robot will be doomed to suffer eternal “lostness”!
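To make the point concrete, here is a small Python experiment comparing the most extreme multipliers (1 and 0) with the gentler 0.6 and 0.2. The three-cell world, the function names and the zero-total guard are assumptions made for this illustration. The robot is imagined to be on the red cell, receives one wrong “green” reading, and then three correct “red” readings.

```python
def sense(belief, world, reading, p_match, p_mismatch):
    """Weight cells by agreement with the reading, then normalize if possible."""
    weighted = [
        p * (p_match if colour == reading else p_mismatch)
        for p, colour in zip(belief, world)
    ]
    total = sum(weighted)
    if total == 0:
        # Every cell has been ruled out: the belief has collapsed for good.
        return weighted
    return [w / total for w in weighted]


def one_bad_then_good(p_match, p_mismatch):
    """One wrong 'green' reading followed by three correct 'red' readings."""
    world = ["red", "green", "green"]  # hypothetical world, robot on cell 0
    belief = [1.0 / 3] * 3
    belief = sense(belief, world, "green", p_match, p_mismatch)
    for _ in range(3):
        belief = sense(belief, world, "red", p_match, p_mismatch)
    return belief


print(one_bad_then_good(1.0, 0.0))  # [0.0, 0.0, 0.0]
print([round(p, 3) for p in one_bad_then_good(0.6, 0.2)])  # [0.818, 0.091, 0.091]
```

With the extreme multipliers, the bad reading wipes out the true cell and the later correct readings wipe out everything else. With 0.6 and 0.2, the robot recovers after a few good readings.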

“Fun fact” – lostness is not a real word!

Now, what happens to the probabilities when the robot is moved? As you have likely seen from moving the robot around in the sketch, the probabilities move with the robot: if the robot moves one cell to the right, all the probabilities move one cell to the right. This is known as exact motion. It assumes that whenever a move command is issued, the robot executes it successfully (can you see where I am going with this?).

Once more, we need to think about what would happen in a real-life scenario with a physical robot interacting with the world. The robot could try to move, have its wheels slip and, as a result, not move as far as it planned to. The robot could have an issue with its wheel encoders and end up overshooting its target. These are things that our code should cater for. Enter – inexact localization.
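For reference, the exact motion described above amounts to a cyclic shift of the probabilities. A minimal Python sketch follows; the name `move_exact` and the convention that `+1` means right and `-1` means left are assumptions for illustration.

```python
def move_exact(belief, step):
    """Shift every probability by `step` cells, wrapping around the cyclic world."""
    n = len(belief)
    # The new value of cell i comes from the cell `step` places behind it.
    return [belief[(i - step) % n] for i in range(n)]


belief = [0.1, 0.6, 0.1, 0.1, 0.1]

print(move_exact(belief, 1))   # [0.1, 0.1, 0.6, 0.1, 0.1]
print(move_exact(belief, -1))  # [0.6, 0.1, 0.1, 0.1, 0.1]
```

Nothing is gained or lost here: the shape of the belief is untouched, it just slides along with the robot.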

The sketch below implements inexact localization. Try it out and see how different it is.

So, what is happening here exactly? The program is now catering for overshoot and undershoot. It does this by estimating how likely the robot is to overshoot or undershoot and then allocating a percentage to each of those outcomes.

It allocates 10% to overshoot, 10% to undershoot and the remaining 80% is how sure the system is that when a command is issued the robot will reach the intended destination.

So what does this mean for our probabilities?

For each and every probability, 80% of its value is sent to the target cell’s probability. 10% of its value is sent to the following cell in the direction of intended movement (overshoot) and 10% stays where it is (undershoot).

The picture below depicts what the process is like for attempting to move one cell to the right:

The values in the bottom cells are then simply summed, and those sums are the new probability values for every cell. Together with the 0.6 and 0.2 sensing multipliers, this lets the system cope with an imperfect move or a bad sensor reading every now and then. If the sensors are completely broken then, of course, it does not matter how fantastic your code is: the system will still fail to localize.
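The same split-and-sum process can be sketched in Python. The names below are made up for illustration, and the sketch assumes that `step` is `+1` (right) or `-1` (left) and that an overshoot lands exactly one cell beyond the target.

```python
P_EXACT = 0.8       # the robot reaches the intended cell
P_OVERSHOOT = 0.1   # the robot goes one cell too far
P_UNDERSHOOT = 0.1  # the robot stays where it was


def move_inexact(belief, step):
    """Spread each cell's probability over target, overshoot and undershoot cells."""
    n = len(belief)
    new_belief = [0.0] * n
    for i, p in enumerate(belief):
        new_belief[(i + step) % n] += P_EXACT * p          # intended destination
        new_belief[(i + 2 * step) % n] += P_OVERSHOOT * p  # one cell too far
        new_belief[i] += P_UNDERSHOOT * p                  # did not move
    return new_belief


# A robot that is certain it is in cell 1 tries to move one cell right.
belief = [0.0, 1.0, 0.0, 0.0, 0.0]
print(move_inexact(belief, 1))  # [0.0, 0.1, 0.8, 0.1, 0.0]
```

Because the three percentages add up to 100%, the total probability is still 1 after the move, so no renormalization is needed here.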

This simple yet powerful algorithm allows your robot to do something amazing. It is only the beginning of localization though (one-dimensional). Think about how something like the Google self-driving car localizes. Believe it or not, it does essentially the same thing that we are doing here – just with a far bigger budget 😉

I’m going to leave you with a few things to try out in the sketches. See if you can understand what happens when you do the actions and try to state why you think it happens.

Things to try:

Try issuing various move-sense commands and observe how the probabilities change.

Try doing an initial sense and then moving multiple times without sensing. What happens to the probabilities when you keep on moving without sensing? Why do you think this happens?

What happens when the robot stands on one spot and keeps sensing a single colour?

Change the number of cells in the world. How does that affect things?
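If you would rather experiment outside the sketches, the following self-contained Python script is one rough way to do it. It is not the code behind the Processing sketches: the world layout, the command format and all the names are assumptions, and it uses the 0.6/0.2 sensing multipliers and the 80/10/10 motion split described earlier.

```python
P_MATCH, P_MISMATCH = 0.6, 0.2
P_EXACT, P_OVERSHOOT, P_UNDERSHOOT = 0.8, 0.1, 0.1


def sense(belief, world, reading):
    """Weight cells by agreement with the reading, then normalize."""
    weighted = [
        p * (P_MATCH if colour == reading else P_MISMATCH)
        for p, colour in zip(belief, world)
    ]
    total = sum(weighted)
    return [w / total for w in weighted]


def move(belief, step):
    """Inexact move of one cell: step is +1 (right) or -1 (left)."""
    n = len(belief)
    moved = [0.0] * n
    for i, p in enumerate(belief):
        moved[(i + step) % n] += P_EXACT * p
        moved[(i + 2 * step) % n] += P_OVERSHOOT * p
        moved[i] += P_UNDERSHOOT * p
    return moved


def run(world, commands):
    """Start fully lost, apply each command in turn and print the belief."""
    belief = [1.0 / len(world)] * len(world)
    for kind, value in commands:
        if kind == "sense":
            belief = sense(belief, world, value)
        else:
            belief = move(belief, value)
        print(kind, value, [round(p, 3) for p in belief])
    return belief


# Hypothetical world and command list: edit both to try the ideas above.
world = ["red", "green", "green", "red", "red"]
run(world, [("sense", "red"), ("move", 1), ("sense", "green")])
```

Change `world` and the list of commands to reproduce each of the experiments, and watch how the printed probabilities evolve.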